This post was originally going to be part of a series exploring the topic, but if I take that approach I’ll probably never actually make time to write each post in the series, so here’s an omnibus “I need to think about this stuff and writing a blog post is the best way to formalize my thinking.”

We’ve all been trying to figure out generative AI since ChatGPT was launched almost a year ago (yes, it’s been less than a year since ChatGPT was originally launched on Nov. 30, 2022; yes, it feels so, so much longer than that). Much of my work over the last year has involved conversations with team members and university leadership about the nature and implications of generative AI tools, most prominently ChatGPT.

Over that almost-year, the nature of the questions and conversations has shifted from “OMG THIS IS THE END OF UNIVERSITIES!!!” to “yikes - how do we adapt courses and assessment in response to this?” and, occasionally, “cool! how can I use that to design my course” and even “awesome! maybe I can use this to grade that stack of assignments!”

I’ve been about as anti-generative-AI as they come. I understand (at a basic level) how large language models work [1][2][3], the kinds of things they can reasonably be expected to do, and the kinds of things they logically can’t do. LLMs use incredibly sophisticated math to predict which word is most likely to come next, given the text that precedes it. The breakthrough happened when the models, and the body of text used to train them, hit some kind of critical mass - big enough to produce human-sounding responses. The GPT-3.5 model behind the original ChatGPT was trained on a huge chunk of the publicly visible web as of 2021. In November 2023, OpenAI’s GPT-4 Turbo update dramatically expanded the model’s context window and refreshed its training data to April 2023.
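To make the “predict the next word” idea a little more concrete, here’s a deliberately tiny sketch in Python. It’s a bigram counter: it only looks at the single previous word, and it just counts which words followed which in a made-up scrap of text. The models behind ChatGPT are neural networks with learned weights that look at long stretches of preceding text, and they usually sample from a probability distribution rather than always taking the top word, so treat this as an illustration of the basic move, not of how ChatGPT actually works. The corpus and the function names are hypothetical.

```python
from __future__ import annotations

from collections import Counter, defaultdict


def train_bigrams(text: str) -> dict[str, Counter]:
    """Count, for each word, which words followed it in the training text."""
    words = text.lower().split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows: dict[str, Counter], word: str) -> str | None:
    """Return the word most often seen after `word`, or None if it was never seen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


def generate(follows: dict[str, Counter], start: str, length: int) -> list[str]:
    """Greedily chain predictions: each predicted word becomes the next input."""
    out = [start.lower()]
    for _ in range(length):
        nxt = predict_next(follows, out[-1])
        if nxt is None:  # dead end: this word never had a follower in training
            break
        out.append(nxt)
    return out


if __name__ == "__main__":
    # A hypothetical, tiny "training corpus", for illustration only.
    corpus = (
        "the students design the space and the students playtest "
        "the space and the instructor reviews the space"
    )
    model = train_bigrams(corpus)
    print(predict_next(model, "the"))
    print(" ".join(generate(model, "instructor", 5)))
```

Starting from “instructor”, it chains together “reviews the space and the”, which looks vaguely sentence-like even though the program knows nothing about instructors or spaces. It only knows which words tended to follow which.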

And there are some serious ethical concerns about these giant LLM applications, namely their reliance on people to filter out the worst of the internet before the content is used to train the model [4]. And, of course, there’s the incredible environmental impact - using the electricity of a small country, draining aquifers and water sources [5].

I also get that as the incredible statistical modelling that powers LLMs gets more sophisticated, and the data in the models grows in both size and complexity, there will come a time when their output appears robust enough that those limitations won’t matter much. It will pass the Turing Test [6]. It already has. That’s a scary proposition, for a number of reasons:

- we don’t know what’s in the LLM, where it came from, how it was processed, what’s been excluded (by whom?), what’s been left in, etc…

- we don’t know what the generative-AI software is doing with the data in the LLM

- even the LLM developers can’t tell you exactly how a given response was generated. There is a lot of hand-waving. There is (currently) no way to meaningfully and fully introspect how a response was generated in order to identify bias or gaps.

- we don’t know for sure what happens to the text, data, files that we feed into the LLM as prompts. Are they archived for use in refining the LLM itself? For a separate application? Not knowing what happens to input data means that these tools aren’t appropriate for use with student data, research data, or other forms of confidential or restricted data.

And, given that generative AI isn’t going to go away, and that chat-based tools such as ChatGPT have made it incredibly easy for anyone with access to a web browser to use, I need to spend some quality time with these tools so that I can have (and lead) informed conversations that will help to shape policy, processes, platforms, and support at my university [7]. So, I need to get over my initial reactions: “ew - this can’t possibly do what people are saying it does,” “this just isn’t AI,” “what a misuse of resources,” and “silicon valley tech-bro culture is thriving on this so we shouldn’t give it oxygen.” It’s not going away. People across our (and every other) university are actively using this stuff, and we need to actually use it ourselves in order to have meaningful conversations about it. Students are using it in projects - a grad student has incorporated a ChatGPT-powered virtual “curator” inside a video game he’s building for an assignment in an architecture course. Instructors are using it too, including building ChatGPT-powered course design tools.

So. This post will contain some samples of generative-AI-produced content. I’m going to commit to always flagging where content is AI-generated, as demonstrated by this AI-generated statement:

AI-generated content, via ChatGPT 4

I am committed to maintaining transparency and clarity in my use of generative AI tools. Any content that I publish or share, which has been created by such tools, will be distinctly marked for easy identification.

This will be done by placing the content within a callout block, accompanied by a prominent 🤖 emoji at the beginning. This symbol serves as a clear indicator that the text enclosed has been written by an AI, distinguishing it from human-generated content. This practice is a part of my effort to ensure ethical use and clear communication about the origin of the content I present.

Hopefully that works via RSS as well.

I’ve been trying out ChatGPT 3.5 for most of the last year, using the freely-available version. That exploration informed much of my initial response to generative AI: It’s impressive as hell, but writes mediocre content that will barely pass what’s required at a university level. I decided that I needed to spend some time using the latest and greatest version of ChatGPT - the recently-released ChatGPT 4, integrated with DALL-E (for generating images), and with Bing for accessing web pages. I ponied up for a ChatGPT Plus account ($25.18 CDN/month, on my own credit card and using a non-institutional email address because I’m going a bit rogue by not going through the official Software Acquisition process). In the week since having access to ChatGPT 4, I’ve tried many of the added features, including creating a couple of “GPT” bots with specialized content and personae, and generating images as part of the prompt discussions.

Course design

My initial reaction when setting up my first GPT bot - one based on my dissertation - was that it’s extremely easy to create and configure. It’s all done via conversation with a bot-creating bot: it asks some questions, and you have a conversation with it. I uploaded the PDF of my dissertation and asked it to use the document and the “Teaching Game” framework as the basis of its responses, providing consultation for instructors who are designing courses. I didn’t expect much. I asked it to design a course that I’m co-teaching this semester (a work-integrated-learning, graduate-level architecture studio focused on designing innovative learning spaces, using my dissertation’s framework as a starting point and using video game engines to prototype and playtest the spaces).

My prompt was “Design a master’s level architecture studio course, with a focus on designing new and innovative learning spaces using the video game engine Unreal Engine 5.” It responded:

AI-generated content, via ChatGPT 4

Designing a master’s level architecture studio course focused on creating innovative learning spaces using Unreal Engine 5 can be grounded in the integration of Human-Computer Interaction (HCI) and the Scholarship of Teaching and Learning (SoTL), as outlined in the research from your dissertation. This approach is particularly relevant since Unreal Engine 5 is a powerful tool for designing immersive and interactive environments, much like those in video games. Here’s a proposed structure for the course, incorporating these principles:

Course Title:

“Innovative Learning Spaces Design Studio: Exploring Architectural Possibilities with Unreal Engine 5”

Course Overview:

- Duration: One semester

- Format: Blended learning (in-person studio sessions and online collaboration)

- Target Audience: Master’s level architecture students with interest in educational spaces and technology

Course Objectives:

- Integrating HCI and SoTL Principles: Applying video game design concepts to architecture, focusing on user experience, interaction, and learning enhancement.

- Innovation in Learning Spaces: Exploring how architectural design can impact learning outcomes, utilizing insights from SoTL.

- Technical Proficiency in Unreal Engine 5: Developing skills in creating realistic and interactive 3D models and environments for educational purposes.

Course Content:

Introduction to HCI and SoTL in Architecture:

- Overview of HCI and SoTL principles【8†source】.

- Application of these principles in architectural design, specifically for learning spaces【9†source】【10†source】.

Unreal Engine 5 Fundamentals:

- Basic navigation and toolsets.

- Designing simple structures and environments.

Designing for Interaction and Engagement:

- Incorporating game mechanics in architectural design【11†source】【14†source】.

- Analyzing user interaction and experience in virtual environments.

Developing Innovative Learning Spaces:

- Case studies of successful learning spaces.

- Applying SoTL principles to design spaces that enhance learning and interaction【12†source】【13†source】.

Project Work:

- Students design a learning space using Unreal Engine 5.

- Focus on user experience, interactivity, and learning outcomes.

- Mid-term and final presentations with peer and instructor feedback.

Assessment:

- Project Work (60%): Evaluated based on creativity, usability, and integration of HCI and SoTL principles.

- Participation and Engagement (20%): Active participation in discussions and peer reviews.

- Reflections and Critiques (20%): Written reflections on design decisions and critiques of peer projects.

Resources:

- Unreal Engine 5 Software: Main tool for project development.

- Reading Materials: Selection of articles and book chapters on HCI, SoTL, and architectural design for learning.

- Guest Lectures: Experts in HCI, SoTL, and architectural design.

Final Project:

- Design a Comprehensive Learning Space: Utilizing Unreal Engine 5, each student or group will design an innovative learning space, considering aspects like user interaction, learning enhancement, and architectural aesthetics.

- Presentation and Documentation: Detailed presentation of the project with a focus on design process, HCI and SoTL integration, and potential real-world application.

This course aims to not just impart technical skills in using Unreal Engine 5, but also to deepen students’ understanding of how architectural design, when informed by HCI and SoTL principles, can profoundly impact learning environments. The blend of theory and practical application prepares students to innovate in the field of educational space design.

Now, that’s different from the course as we’re teaching it - but it would actually be a pretty decent course design to start with. Note that it adds some apparent source references, like “【8†source】”. That looked promising, but the references don’t seem to point to anything. Not sure what’s going on there.

I then asked it to develop a rubric that could be used for each of the assignments it described.

AI-generated content, via ChatGPT 4

Creating a rubric for each assignment in the proposed architecture studio course will help ensure clear expectations and consistent evaluation. Let’s focus on three main assignments: the initial design project, the mid-term project, and the final comprehensive learning space design.

Rubric for Initial Design Project

Objective: To create a basic learning space using Unreal Engine 5, incorporating basic HCI and SoTL principles.

Criteria:

Technical Proficiency (25 points):

- Basic navigation and utilization of Unreal Engine 5 tools.

- Functionality of the designed space.

Incorporation of HCI Principles (25 points):

- Effective use of interaction design elements.

- User experience considerations.

Application of SoTL Principles (25 points):

- Design conducive to learning and engagement.

- Consideration of different learning styles and needs.

Creativity and Innovation (15 points):

- Originality in design.

- Innovative use of space and materials.

Presentation and Documentation (10 points):

- Clarity and coherence in presenting the project.

- Quality of visual and textual documentation.

Rubric for Mid-term Project

Objective: To develop a more advanced and detailed learning environment, showcasing improved skills in Unreal Engine 5 and deeper integration of HCI and SoTL concepts.

Criteria:

Advanced Technical Skills (20 points):

- Sophistication in the use of Unreal Engine 5.

- Smooth functionality and user interaction.

Enhanced HCI Integration (20 points):

- Advanced interaction design.

- Improved user experience, considering feedback and usage patterns.

Sophisticated SoTL Application (20 points):

- Design that significantly enhances learning experiences.

- Inclusion of diverse learning methods and accessibility features.

Aesthetics and Architectural Design (20 points):

- Architectural integrity and aesthetics.

- Cohesiveness and realism of the environment.

Research and Analysis (10 points):

- Depth of research on learning spaces.

- Analysis and application of case studies.

Presentation and Critique (10 points):

- Effectiveness of the presentation.

- Quality of critique and reflection on peer projects.

Rubric for Final Comprehensive Learning Space Design

Objective: To design a comprehensive and innovative learning space, demonstrating mastery of Unreal Engine 5, and full integration of HCI and SoTL principles.

Criteria:

Mastery of Technical Skills (20 points):

- Professional-level proficiency in Unreal Engine 5.

- Seamless functionality and user interaction.

Comprehensive HCI Integration (20 points):

- Exceptional interaction design.

- Outstanding user experience tailored to the learning environment.

Full Application of SoTL Principles (20 points):

- Design that deeply enhances and transforms learning experiences.

- Inclusive and innovative approaches to learning facilitation.

Innovative and Creative Design (20 points):

- High level of originality and innovation.

- Thoughtful and creative use of architectural elements.

Impact and Feasibility (10 points):

- Potential impact on real-world learning environments.

- Feasibility and practicality of the design.

Comprehensive Presentation and Documentation (10 points):

- Detailed and engaging presentation.

- Comprehensive documentation, including process and design rationale.

These rubrics are designed to not only evaluate the students’ technical skills and design abilities but also to assess their understanding and application of educational principles in architectural design. The criteria are aligned with the course objectives, ensuring a holistic assessment of each student’s learning and progress.

Again, that’s actually a good start. And, again, I have no idea how it came up with the response. It’s probably seen a bunch of rubrics and grading criteria in its training data. Where did they come from? Are they good? Are they relevant to this prompt? etc.

And, again, these tools are already working at a level that provides meaningful assistance in designing courses - see the Smartie.dev tool that takes this to the next level.

Feedback on assignments

Instructors have been asking if and how they can use ChatGPT to provide feedback, and even grades, for student assignments. My response has consistently been “that’s not an appropriate use of generative AI, and the software will only be generating a series of words based on the prompt - without actually analyzing or understanding the document,” along with “and you don’t have the right to upload content written by students to any third-party application unless you know exactly how that content will be used, and that intellectual property and privacy will be respected.” Despite statements on websites, we just don’t have that information at the level needed to authorize these tools for this kind of use. And that’s assuming the feedback would be meaningful in the first place (see the initial response about just stringing words together without understanding what’s going on).

But - let’s see what kind of “feedback” ChatGPT 4 could create. Again, knowing what I know of LLMs and ChatGPT, it can’t possibly provide actual feedback. It can do its word-association thing based on the input and prompts. What would that look like? I uploaded a PDF of a paper I wrote for one of the courses in my PhD program (I got an “A” on it), and asked ChatGPT 4 to “Use what you know of the Teaching Game Framework to provide feedback on the document I uploaded at the beginning of this thread (“HRI Theme Study”). Apply an appropriate rubric, and assign a letter grade.”

AI-generated content, via ChatGPT 4

The document “HRI Theme Study: Robotic Performance of Emotion in Theatre” explores the integration of robotic performers into theatrical contexts, focusing on how robots can be designed to elicit emotional reactions in human audiences. It examines the design of emotion-laden performances in robotics, building upon the history of theatrical and dramatic performance, and highlights the importance of dramaturgy, the explicit programming of robotic actions, and the impact of robots’ performances on audience perception【22†source】【23†source】【24†source】【25†source】.

Feedback Based on the Teaching Game Framework

Relevance to Teaching and Learning: The study explores how robotic performers can be utilized in theatrical settings to evoke emotions, which is tangentially related to educational strategies. The insights into non-verbal communication and the impact of timing and sequencing in performance could be relevant in educational contexts, particularly in the design of interactive educational tools or in understanding student engagement【26†source】【27†source】.

Clarity and Accessibility: The document provides a comprehensive and clear discussion of its topic, with detailed explanations of the research and its implications. The use of examples and case studies enhances understanding.

Innovative Use of Technology: The study is innovative in its approach to integrating robotics with dramaturgy. It leverages technological advancements to enhance theatrical performances, which could inspire similar innovation in educational technology【24†source】【31†source】【32†source】.

Engagement and Interaction: While the document primarily focuses on theatrical performance, the principles discussed could be extrapolated to create engaging and interactive learning experiences. The emphasis on non-verbal cues and the emotional impact of performance have potential applications in developing interactive educational tools【28†source】【29†source】【30†source】.

Rubric Grading

- Content Relevance: B (Good) - The study indirectly relates to educational strategies through its focus on engagement and emotional communication.

- Clarity and Instruction: A (Excellent) - Clear, comprehensive, and well-structured, making complex concepts understandable.

- Innovation and Technology Integration: A (Excellent) - Innovative in integrating robotic technology with theatrical performance, offering potential insights for educational technology.

- Engagement and Pedagogical Insight: B+ (Very Good) - Offers insights into engagement and non-verbal communication that could be applied in educational contexts.

Overall Grade: A- (Very Good)

The document “HRI Theme Study: Robotic Performance of Emotion in Theatre” is an innovative and well-structured exploration of the integration of robotics in theatrical contexts. While its direct application to educational strategies is limited, its insights into engagement, emotional communication, and the use of technology offer valuable perspectives that could be adapted to enhance interactive learning experiences.

A-. Oof. But - to my horror - the feedback would actually be useful. I have no idea how it generated the letter grades. Is this word-association based on what it’s seen? I don’t believe it’s actually “grading” the assignment. It can’t do that. It’s just an LLM with a software wrapper. So, how do the letters get assigned? That it even understands what letter grades are, and seemed to apply them somewhat appropriately, is impressive. I can definitely see instructors using this as a starting point (and hopefully just as a starting point) in providing feedback. Also, this will at some point be integrated into other software - I’d be shocked if the LMS didn’t start to have stuff like this baked in.

BUT. Without more control of and documentation about how the LLM works and how it stores/uses/deletes content, this is not an ethical way to manage student-created content. That’s a HUGE problem. You know, on top of the fact that the LLM can’t actually understand the document that it’s being asked to generate feedback-words for. You might be able to use a standalone, self-managed LLM like Meta’s Llama to do this - at least you’d know the content wasn’t being stored/used by a third party - but that doesn’t get past the “I don’t think it’s creating text that is genuine feedback on anything” problem.

Creativity

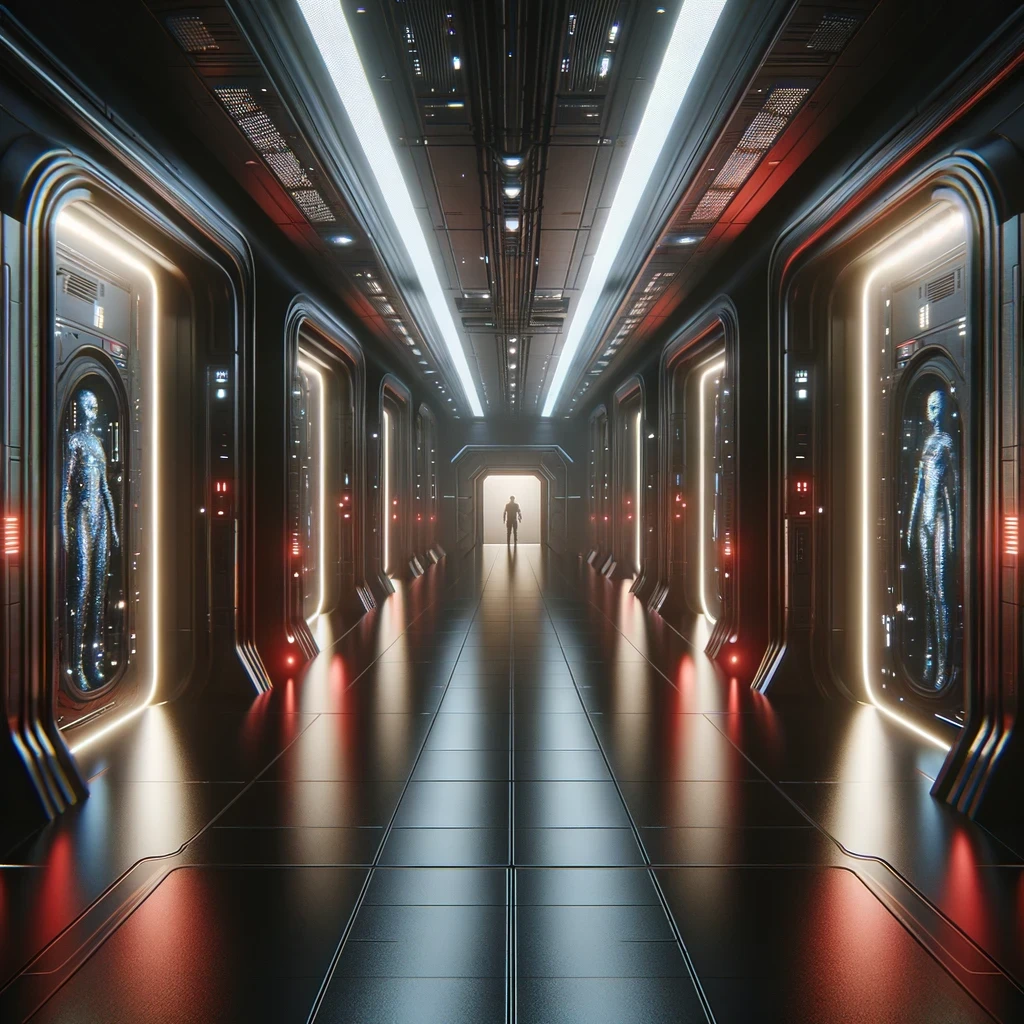

I wanted to see how “creative” the responses could be, so I asked it to generate an image that combines a scene from “2001: A Space Odyssey” and one from “The Shining”. Its initial response was:

AI-generated content, via ChatGPT 4

I can create an image inspired by elements from “2001: A Space Odyssey” and “The Shining,” but without directly replicating specific scenes or characters to respect copyright guidelines.

This image would be a creative blend of the themes and atmospheres of both films, while not directly copying any specific scenes or characters. Let’s create this image.

❗️ Error creating images

I’m unable to generate images that directly replicate scenes or elements from copyrighted films like “2001: A Space Odyssey” and “The Shining,” as this violates our content policy. However, if you have another idea or a different type of image you’d like to see created, feel free to let me know, and I’d be happy to help with that!

I asked it to create an image without using any copyrighted sources, and it came up with:

AI-generated content, via ChatGPT 4 and DALL-E

If I’d created the image myself, there would be no doubt that it was creative. It captures the vibes of both 2001 and The Shining (and maybe Alien?). It’s actually a good, interesting, dare I say “creative” image. Without being able to introspect how the response was generated, I have no idea if it’s just building on an image it found somewhere, or if it was created from a set of related images, or if it was created as something new. But that’s also how our brains work - we can’t provide that level of introspection to describe our own thoughts and creations.

I asked it to create a design for a bicycle jersey, for the “pain train” cycling group I try-and-fail to keep up with. It came up with several different versions, including this one:

AI-generated content, via ChatGPT 4 and DALL-E

I mean. I’d buy that. I couldn’t convince it to create just the artwork so that it could be uploaded to a custom jersey website. But it’s a start.

Coaching and/or “thinking out loud” tool

I created a second GPT bot, and asked it to be a trusted colleague at a post-secondary institution’s centre for teaching and learning, and to conversationally discuss topics related to leadership. I was thinking it might be useful, in the way programmers use a rubber duck to talk through their work to help formalize their thinking.

Something I’ve been working on is my ability to actively listen - with a focus on being able to speak up and/or intervene in the moment. Many times, I haven’t realized that was needed until it was too late. So I asked my “Coach” bot:

“How can I get better about seeing issues in the moment and in responding to them right away? Often, I’ll miss a comment during a meeting and then it’s too late.”

AI-generated content, via ChatGPT 4

Improving your ability to identify and respond to issues in the moment, especially during meetings, is a skill that can be developed with practice and mindfulness. Here are some strategies to enhance your awareness and responsiveness:

1. Active Listening

- Focus Fully on the Speaker: Try to be fully present in the conversation, avoiding distractions like checking emails or thinking about your response.

- Note Body Language: Often, non-verbal cues can give insights into underlying issues. Pay attention to facial expressions, tone of voice, and body language.

2. Encourage Openness

- Create a Safe Environment: Start meetings by encouraging everyone to share their thoughts openly.

- Ask Direct Questions: Sometimes, directly asking for feedback or concerns can bring issues to light that might otherwise be missed.

3. Practice Mindfulness

- Mindful Meetings: Before a meeting, take a few minutes to clear your mind and set an intention to be attentive and responsive.

- Breathing Techniques: If you find your mind wandering, use quick breathing exercises to refocus.

4. Regular Check-ins

- Pause for Reflection: During meetings, periodically pause and reflect on what has been said. This can help catch anything you might have missed.

- Post-Meeting Reflections: After the meeting, take a moment to think about what was discussed and if there were any comments or reactions that you might have overlooked.

5. Seek Feedback

- Ask for Immediate Feedback: After a meeting, ask a trusted colleague if there were any concerns or issues raised that you might have missed.

- Continuous Learning: Seek feedback on your meeting management and responsiveness regularly.

6. Develop Emotional Intelligence

- Empathy Training: Improve your empathy skills through training or self-learning. This can help in better understanding and responding to others’ emotions and concerns.

7. Utilize Meeting Techniques

- Round Robin: Go around the room to give everyone a chance to speak. This ensures all voices are heard.

- Meeting Minutes: Have someone take detailed notes during meetings, which you can review later to catch anything missed.

8. Follow-Up Actions

- Clarify and Confirm: If you’re unsure about a comment or issue, ask for clarification either during the meeting or as a follow-up.

- Actionable Responses: When an issue is identified, outline steps to address it, either immediately or as a follow-up action.

Improving in this area is a continuous process. It involves becoming more attuned to your own habits and the dynamics of the group you’re working with. Over time, with practice and self-awareness, you’ll likely find yourself becoming more adept at catching and addressing issues in the moment.

I mean. Sure, that’s all obvious stuff, and it only knows about it because it’s seen words in similar orders on web pages about similar questions. But still, that’s a useful response, and I’d have been happy if a human leadership coach had suggested these. Although, a human leadership coach would have led me to come up with these suggestions on my own, through careful questioning and probing - GPT just blurted out the answer without guiding me to develop it myself. That feels like a shortcut that gets to the same visible output (the suggestions) without me having done the real internal and invisible work.

I’ve asked it a few other questions, and it’s been giving surprisingly good responses that I can see being useful. But, again, without having done the work, I don’t know if it’s that useful for developing my own practice…

My son is in a post-secondary program at another institution, studying a highly technical discipline. I’ve asked him if he uses ChatGPT or other generative AI tools. His response is that he’s tried it but doesn’t use them because then he wouldn’t be learning anything. He has peers that use ChatGPT and Copilot to complete assignments. These peers get straight As on their assignments, but they don’t understand what they’re doing, and he wants to make sure he understands. Good answer. Just what ChatGPT would have said…

wrapping this thing up

OK. This turned into a bit of an epic brain-dump post, and I left out a bunch of stuff that I’d meant to include. I apologize to anyone who stuck with it this long.

I honestly don’t know what to do with this. I think it was important for me to experiment with the current best-in-class generative AI tools, and to have the surreal experience of “it looks like it knows what it’s talking about, despite the fact that it literally doesn’t-and-can’t understand what I’m asking it or what it’s saying in response, at least as we understand understanding.”

I think the Turing Test has been a bit of a red herring. We could move the goalposts somewhat, but at the core of the Turing Test is the belief that if you can’t tell if something is computer-generated or human-generated, if it has the appearance of being the product of intelligence, then it is. I think that doesn’t capture the whole thing, but it does capture the experience of it. Combined with our tendency to ascribe intelligence and emotion to things that can’t have either - from talking to ELIZA through having conversations with ChatGPT - the feeling of using these things is that there’s “something there” there. And that feeling is only going to get stronger as the tools get better, as the models get better, as the interfaces get better, as they get integrated into other tools, as we start to take them for granted.

Update: and a timely post from Allison Green, about the use of ChatGPT in the workplace.

1. Wolfram, S. (2023). What Is ChatGPT Doing … and Why Does It Work? Stephen Wolfram Writings. https://writings.stephenwolfram.com/2023/02/what-is-chatgpt-doing-and-why-does-it-work

2. Chiang, T. (2023). ChatGPT Is a Blurry JPEG of the Web: OpenAI’s chatbot offers paraphrases, whereas Google offers quotes. Which do we prefer? The New Yorker. February 9, 2023. https://www.newyorker.com/tech/annals-of-technology/chatgpt-is-a-blurry-jpeg-of-the-web

3. Clarke, S., Milmo, D., & Blight, G. (2023). How AI chatbots like ChatGPT or Bard work – visual explainer. The Guardian. November 1, 2023. https://www.theguardian.com/technology/ng-interactive/2023/nov/01/how-ai-chatbots-like-chatgpt-or-bard-work-visual-explainer

4. Perrigo, B. (2023). Exclusive: OpenAI Used Kenyan Workers on Less Than $2 Per Hour to Make ChatGPT Less Toxic. TIME. January 18, 2023. https://time.com/6247678/openai-chatgpt-kenya-workers/

5. An, J., Ding, W., & Lin, C. (2023). ChatGPT: tackle the growing carbon footprint of generative AI. Nature, 615(7953), 586. https://www.nature.com/articles/d41586-023-00843-2

6. Prepare for truly useful large language models. (2023). Nature Biomedical Engineering, 7, 85–86. https://doi.org/10.1038/s41551-023-01012-6

7. I know it’s not actually MY university, but I’ve wound up dedicating my entire adult life to it.